How to transfer data: Difference between revisions

No edit summary |

(Added information on transferring from CHGI) |

||

| Line 3: | Line 3: | ||

|title=Data Transfer Nodes | |title=Data Transfer Nodes | ||

|message= | |message= | ||

For performance and resource reasons, file transfers should be performed on the data transfer node arc-dtn.ucalgary.ca rather than on the ARC login node. Since the ARC DTN has the same shares as ARC, any files you transfer to the DTN will also be available on ARC. | |||

}} | }} | ||

== Command-line | == Transferring Data from CHGI == | ||

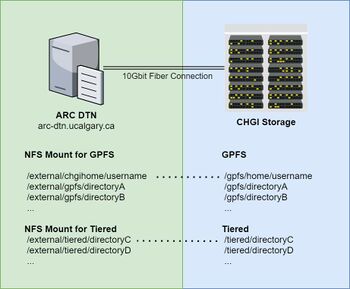

[[File:ARCDTN-CHGI-Mapping.jpeg|350px|thumb|right|ARC-DTN and CHGI NFS mounts]] | |||

Due to the large datasets that are currently stored at the Center for Health Genomics and Informatics (CHGI), we have set up a dedicated 10Gbit fibre connection between the ARC DTN and CHGI to help users quickly transfer files as part of the [[CHGI Transition]]. | |||

Users needing to migrate their data from the CHGI to ARC are able to do so through the read-only NFS mounts that have been set up on the ARC DTN. The NFS mounts provide a read-only view of the CHGI filesystems and will automatically take advantage of the dedicated 10Gbit fibre connection. Access to the filesystem is restricted to authorized CHGI group members. | |||

Most filesystems at CHGI have been made available under the <code>/external</code> mount point on ARC DTN. Please refer to the following table to help determine your filesystem path on ARC-DTN. | |||

{| class="wikitable" | |||

! ARC DTN | |||

! CHGI | |||

|- | |||

| /external/chgihome || /gpfs/home | |||

|- | |||

| /external/gpfs/achri_data || /gpfs/achri_data | |||

|- | |||

| /external/gpfs/achri_galaxy || /gpfs/achri_galaxy | |||

|- | |||

| /external/gpfs/cbousman || /gpfs/cbousman | |||

|- | |||

| /external/gpfs/charb_data || /gpfs/charb_data | |||

|- | |||

| /external/gpfs/common || /gpfs/common | |||

|- | |||

| /external/gpfs/ebg_data || /gpfs/ebg_data | |||

|- | |||

| /external/gpfs/ebg_gmb || /gpfs/ebg_gmb | |||

|- | |||

| /external/gpfs/ebg_projects || /gpfs/ebg_projects | |||

|- | |||

| /external/gpfs/ebg_web || /gpfs/ebg_web | |||

|- | |||

| /external/gpfs/ebg_work || /gpfs/ebg_work | |||

|- | |||

| /external/gpfs/gallo || /gpfs/gallo | |||

|- | |||

| /external/gpfs/qlong || /gpfs/qlong | |||

|- | |||

| /external/gpfs/snyder_irida || /gpfs/snyder_irida | |||

|- | |||

| /external/gpfs/snyder_work || /gpfs/snyder_work | |||

|- | |||

| /external/gpfs/vetmed_data || /gpfs/vetmed_data | |||

|- | |||

| /external/gpfs/vetmed_stage || /gpfs/vetmed_stage | |||

|- | |||

| /external/tiered/achri_data || /tiered/achri_data | |||

|- | |||

| /external/tiered/chgi_data || /tiered/chgi_data | |||

|- | |||

| /external/tiered/ebg_mic || /tiered/ebg_mic | |||

|- | |||

| /external/tiered/ewang_scratch || /tiered/ewang_scratch | |||

|- | |||

| /external/tiered/ewang || /tiered/ewang | |||

|- | |||

| /external/tiered/jwasmuth || /tiered/jwasmuth | |||

|- | |||

| /external/tiered/kkurek || /tiered/kkurek | |||

|- | |||

| /external/tiered/morph || /tiered/morph | |||

|- | |||

| /external/tiered/mtgraovac || /tiered/mtgraovac | |||

|- | |||

| /external/tiered/parnold || /tiered/parnold | |||

|- | |||

| /external/tiered/robbins || /tiered/robbins | |||

|- | |||

| /external/tiered/smorrissy || /tiered/smorrissy | |||

|- | |||

| /external/tiered/snyder_data || /tiered/snyder_data | |||

|} | |||

To get started with your data migration: | |||

# Log in to the ARC DTN via SSH at arc-dtn.ucalgary.ca. | |||

# Ensure that you have read permission on the files you are interested in. Verify this by navigating to the filesystem using the mapping shown above and listing or reading your files. | |||

#* If you have read permission, you may start a file transfer using screen and rsync, as described in the following section. | |||

#* If you do not have read permission or have any difficulties, please contact us at support@hpc.ucalgary.ca. | |||

== Transferring Large Datasets == | |||

=== Using screen and rsync === | |||

If you want to transfer a large amount of data from a remote Unix system to ARC, you can use '''rsync''' to handle the transfer. | |||

However, you will have to keep your SSH session from your workstation alive during the entire transfer. | |||

Very often this is not convenient or not feasible. | |||

To overcome this one can run the '''rsync''' transfer inside a '''screen''' virtual session on ARC. | |||

'''screen''' creates an SSH session local to ARC and allows for reconnection from SSH sessions from your workstation. | |||

To start the transfer: | |||

<pre> | |||

# Login to ARC | |||

$ ssh username@arc.ucalgary.ca | |||

# Start a screen session | |||

$ screen | |||

# While in the new screen session, start the transfer with rsync. | |||

$ rsync -axv ext_user@external.system:path/to/remote/data . | |||

# Disconnect from the screen session with the hotkey 'Ctrl-a d' | |||

# You may now disconnect from ARC, close the lid of your laptop, or turn off the computer. | |||

</pre> | |||

To check whether the transfer has finished: | |||

<pre> | |||

# Login to ARC | |||

$ ssh username@arc.ucalgary.ca | |||

# Reconnect to the screen session | |||

$ screen -r | |||

# If the transfer has been finished close the screen session. | |||

$ exit | |||

# If the transfer is still running, disconnect from the screen session with the hotkey 'Ctrl-a d' | |||

</pre> | |||

=== Very large files === | |||

If the files are large and the transfer speed is low the transfer may fail before the file has been transferred. | |||

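'''rsync''' may not help here: by default it discards a partially transferred file, so re-running it starts that file again from the beginning (its <code>--partial</code> option keeps the partial file; this behaviour has not been re-tested recently). | |||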

The solution may be to split the large file into smaller chunks, transfer them using rsync and then join them on the remote system (ARC for example): | |||

<pre> | |||

# Large file is 506MB in this example. | |||

$ ls -l t.bin | |||

-rw-r--r-- 1 drozmano drozmano 530308481 Jun 8 11:06 t.bin | |||

# split the file: | |||

$ split -b 100M t.bin t.bin_chunk. | |||

# Check the chunks. | |||

$ ls -l t.bin_chunk.* | |||

-rw-r--r-- 1 drozmano drozmano 104857600 Jun 8 11:09 t.bin_chunk.aa | |||

-rw-r--r-- 1 drozmano drozmano 104857600 Jun 8 11:09 t.bin_chunk.ab | |||

-rw-r--r-- 1 drozmano drozmano 104857600 Jun 8 11:09 t.bin_chunk.ac | |||

-rw-r--r-- 1 drozmano drozmano 104857600 Jun 8 11:09 t.bin_chunk.ad | |||

-rw-r--r-- 1 drozmano drozmano 104857600 Jun 8 11:09 t.bin_chunk.ae | |||

-rw-r--r-- 1 drozmano drozmano 6020481 Jun 8 11:09 t.bin_chunk.af | |||

# Transfer the files: | |||

$ rsync -axv t.bin_chunk.* username@arc.ucalgary.ca: | |||

</pre> | |||

Then log in to ARC and join the files: | |||

<pre> | |||

$ cat t.bin_chunk.* > t.bin | |||

$ ls -l | |||

-rw-r--r-- 1 drozmano drozmano 530308481 Jun 8 11:06 t.bin | |||

</pre> | |||

Success. | |||

== File Transfer Tools == | |||

=== Command-line based === | |||

You may use the following command-line file transfer utilities on Linux, MacOS, and Windows. File transfers using these methods require your computer to be on the University of Calgary campus network or via the University of Calgary IT General VPN. | You may use the following command-line file transfer utilities on Linux, MacOS, and Windows. File transfers using these methods require your computer to be on the University of Calgary campus network or via the University of Calgary IT General VPN. | ||

If you are working on a Windows computer, you will need to install these utilities separately as they are not installed by default. Newer versions of Windows 10 (1903 and up) have '''SSH''' built-in as part of the '''openssh''' package. However, you may be better off using one of the [[#GUI File Transfer]] tools listed in the following section. | If you are working on a Windows computer, you will need to install these utilities separately as they are not installed by default. Newer versions of Windows 10 (1903 and up) have '''SSH''' built-in as part of the '''openssh''' package. However, you may be better off using one of the [[#GUI File Transfer]] tools listed in the following section. | ||

=== <code>scp</code>: Secure Copy === | ==== <code>scp</code>: Secure Copy ==== | ||

<code>scp</code> is a secure and encrypted method of transferring files between machines via SSH. It is available on Linux and Mac computers by default and can be installed on Windows by installing the OpenSSH package. | <code>scp</code> is a secure and encrypted method of transferring files between machines via SSH. It is available on Linux and Mac computers by default and can be installed on Windows by installing the OpenSSH package. | ||

| Line 24: | Line 179: | ||

You may see all the available options with <code>scp</code> by viewing the [http://man7.org/linux/man-pages/man1/scp.1.html man page]. | You may see all the available options with <code>scp</code> by viewing the [http://man7.org/linux/man-pages/man1/scp.1.html man page]. | ||

==== Example Usage ==== | ===== Example Usage ===== | ||

Common operations are given below. On your desktop, to: | Common operations are given below. On your desktop, to: | ||

* Transfer a single file (eg. <code>data.dat</code>) to ARC: <syntaxhighlight lang="bash"> | * Transfer a single file (eg. <code>data.dat</code>) to ARC: <syntaxhighlight lang="bash"> | ||

| Line 36: | Line 191: | ||

</syntaxhighlight> | </syntaxhighlight> | ||

=== <code>rsync</code> === | ==== <code>rsync</code> ==== | ||

<code>rsync</code> is a utility for transferring and synchronizing files efficiently. The efficiency for its file synchronization is achieved by its delta-transfer algorithm, which reduces the amount of data sent over the network by sending only the differences between the source files and the existing files in the destination. | <code>rsync</code> is a utility for transferring and synchronizing files efficiently. The efficiency for its file synchronization is achieved by its delta-transfer algorithm, which reduces the amount of data sent over the network by sending only the differences between the source files and the existing files in the destination. | ||

| Line 50: | Line 205: | ||

You may see all the available options with <code>rsync</code> by viewing the [http://man7.org/linux/man-pages/man1/rsync.1.html man page]. | You may see all the available options with <code>rsync</code> by viewing the [http://man7.org/linux/man-pages/man1/rsync.1.html man page]. | ||

==== Example Usage ==== | ===== Example Usage ===== | ||

Common operations are given below. On your desktop, to: | Common operations are given below. On your desktop, to: | ||

| Line 73: | Line 228: | ||

</syntaxhighlight> | </syntaxhighlight> | ||

=== <code>sftp</code>: secure file transfer protocol === | ==== <code>sftp</code>: secure file transfer protocol ==== | ||

* Manual page on-line: http://man7.org/linux/man-pages/man1/sftp.1.html | * Manual page on-line: http://man7.org/linux/man-pages/man1/sftp.1.html | ||

| Line 87: | Line 242: | ||

Commands are case insensitive. | Commands are case insensitive. | ||

=== <code>rclone</code>: rsync for cloud storage === | ==== <code>rclone</code>: rsync for cloud storage ==== | ||

'''Rclone''' is a command line program to sync files and directories to and from a number of on-line storage services. | '''Rclone''' is a command line program to sync files and directories to and from a number of on-line storage services. | ||

* https://rclone.org/ | * https://rclone.org/ | ||

== | === Graphical based === | ||

=== FileZilla === | ==== FileZilla ==== | ||

FileZilla is a free cross-platform file transfer program that can transfer files via sFTP. | FileZilla is a free cross-platform file transfer program that can transfer files via sFTP. | ||

https://filezilla-project.org/download.php?type=client (Note: Installer may bundle ads and unwanted software. Be careful when clicking through.) | https://filezilla-project.org/download.php?type=client (Note: Installer may bundle ads and unwanted software. Be careful when clicking through.) | ||

=== MobaXterm (Windows) === | ==== MobaXterm (Windows) ==== | ||

'''MobaXterm''' is the recommended tool for remote access and data transfer in '''Windows''' OSes. | '''MobaXterm''' is the recommended tool for remote access and data transfer in '''Windows''' OSes. | ||

| Line 108: | Line 263: | ||

* Website: https://mobaxterm.mobatek.net/ | * Website: https://mobaxterm.mobatek.net/ | ||

=== WinSCP (Windows) === | ==== WinSCP (Windows) ==== | ||

WinSCP is a free Windows file transfer tool. | WinSCP is a free Windows file transfer tool. | ||

https://winscp.net/eng/index.php | https://winscp.net/eng/index.php | ||

[[Category:Guides]] | [[Category:Guides]] | ||

Revision as of 20:39, 28 October 2020

Data Transfer Nodes: For performance and resource reasons, file transfers should be performed on the data transfer node arc-dtn.ucalgary.ca rather than on the ARC login node. Since the ARC DTN has the same shares as ARC, any files you transfer to the DTN will also be available on ARC.

Transferring Data from CHGI

Due to the large datasets that are currently stored at the Center for Health Genomics and Informatics (CHGI), we have set up a dedicated 10Gbit fibre connection between the ARC DTN and CHGI to help users quickly transfer files as part of the CHGI Transition.

Users needing to migrate their data from the CHGI to ARC are able to do so through the read-only NFS mounts that have been set up on the ARC DTN. The NFS mounts provide a read-only view of the CHGI filesystems and will automatically take advantage of the dedicated 10Gbit fibre connection. Access to the filesystem is restricted to authorized CHGI group members.

Most filesystems at CHGI have been made available under the /external mount point on ARC DTN. Please refer to the following table to help determine your filesystem path on ARC-DTN.

| ARC DTN | CHGI |

|---|---|

| /external/chgihome | /gpfs/home |

| /external/gpfs/achri_data | /gpfs/achri_data |

| /external/gpfs/achri_galaxy | /gpfs/achri_galaxy |

| /external/gpfs/cbousman | /gpfs/cbousman |

| /external/gpfs/charb_data | /gpfs/charb_data |

| /external/gpfs/common | /gpfs/common |

| /external/gpfs/ebg_data | /gpfs/ebg_data |

| /external/gpfs/ebg_gmb | /gpfs/ebg_gmb |

| /external/gpfs/ebg_projects | /gpfs/ebg_projects |

| /external/gpfs/ebg_web | /gpfs/ebg_web |

| /external/gpfs/ebg_work | /gpfs/ebg_work |

| /external/gpfs/gallo | /gpfs/gallo |

| /external/gpfs/qlong | /gpfs/qlong |

| /external/gpfs/snyder_irida | /gpfs/snyder_irida |

| /external/gpfs/snyder_work | /gpfs/snyder_work |

| /external/gpfs/vetmed_data | /gpfs/vetmed_data |

| /external/gpfs/vetmed_stage | /gpfs/vetmed_stage |

| /external/tiered/achri_data | /tiered/achri_data |

| /external/tiered/chgi_data | /tiered/chgi_data |

| /external/tiered/ebg_mic | /tiered/ebg_mic |

| /external/tiered/ewang_scratch | /tiered/ewang_scratch |

| /external/tiered/ewang | /tiered/ewang |

| /external/tiered/jwasmuth | /tiered/jwasmuth |

| /external/tiered/kkurek | /tiered/kkurek |

| /external/tiered/morph | /tiered/morph |

| /external/tiered/mtgraovac | /tiered/mtgraovac |

| /external/tiered/parnold | /tiered/parnold |

| /external/tiered/robbins | /tiered/robbins |

| /external/tiered/smorrissy | /tiered/smorrissy |

| /external/tiered/snyder_data | /tiered/snyder_data |
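
The mapping in the table follows a simple prefix pattern, which can be sketched as a small shell function. This is only an illustration: the function name `chgi_to_dtn` and the example paths are hypothetical, and the function does not check that a given filesystem is actually one of those listed above.

```shell
# Hypothetical helper: translate a CHGI path into its ARC DTN equivalent,
# following the prefix pattern of the table (not an official ARC tool).
chgi_to_dtn() {
    case "$1" in
        # /gpfs/home is the one special case: it appears as /external/chgihome
        /gpfs/home|/gpfs/home/*)
            printf '%s\n' "/external/chgihome${1#/gpfs/home}" ;;
        # every other /gpfs and /tiered path is simply prefixed with /external
        /gpfs/*|/tiered/*)
            printf '%s\n' "/external$1" ;;
        *)
            printf 'no known mapping for %s\n' "$1" >&2
            return 1 ;;
    esac
}

# Example paths are made up for illustration.
chgi_to_dtn /gpfs/home/username/results
chgi_to_dtn /tiered/chgi_data/project
```

Under these assumptions the two calls print `/external/chgihome/username/results` and `/external/tiered/chgi_data/project`.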

To get started with your data migration:

1. Log in to the ARC DTN via SSH at arc-dtn.ucalgary.ca.
2. Ensure that you have read permission on the files you are interested in. Verify this by navigating to the filesystem using the mapping shown above and listing or reading your files.
   - If you have read permission, you may start a file transfer using screen and rsync, as described in the following section.
   - If you do not have read permission or have any difficulties, please contact us at support@hpc.ucalgary.ca.
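
The permission check in the second step could be scripted roughly as follows. This is a minimal sketch: the function name `can_read_dir` is hypothetical, and `/external/gpfs/common` is just one path taken from the table, to be replaced with your own.

```shell
# Hypothetical helper: report whether the current user can list a directory.
can_read_dir() {
    # -d: it exists and is a directory; -r: it can be listed; -x: it can be entered
    if [ -d "$1" ] && [ -r "$1" ] && [ -x "$1" ]; then
        echo "OK: $1 is readable"
    else
        echo "NO ACCESS: $1 is missing or not readable"
        return 1
    fi
}

# Run this on the ARC DTN; substitute the path that applies to you.
can_read_dir /external/gpfs/common || echo "Contact support@hpc.ucalgary.ca for help."
```

Checking the directory first avoids starting a long transfer that would fail immediately on a permission error.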

Transferring Large Datasets

Using screen and rsync

If you want to transfer a large amount of data from a remote Unix system to ARC, you can use rsync to handle the transfer. However, you will have to keep the SSH session from your workstation alive during the entire transfer. Very often this is inconvenient or not feasible.

To overcome this, you can run the rsync transfer inside a screen virtual session on ARC. screen creates a terminal session that lives on ARC itself, so it keeps running after you disconnect and can be reattached from a later SSH session from your workstation.

To start the transfer:

    # Log in to ARC
    $ ssh username@arc.ucalgary.ca

    # Start a screen session
    $ screen

    # While in the new screen session, start the transfer with rsync.
    $ rsync -axv ext_user@external.system:path/to/remote/data .

    # Disconnect from the screen session with the hotkey 'Ctrl-a d'
    # You may now disconnect from ARC, close the lid of your laptop, or turn off the computer.

To check whether the transfer has finished:

    # Log in to ARC
    $ ssh username@arc.ucalgary.ca

    # Reconnect to the screen session
    $ screen -r

    # If the transfer has finished, close the screen session.
    $ exit

    # If the transfer is still running, disconnect from the screen session with the hotkey 'Ctrl-a d'

Very large files

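If the files are large and the transfer speed is low, the transfer may fail before a file has been fully transferred. rsync may not help here: by default it discards a partially transferred file, so re-running it starts that file again from the beginning (its --partial option keeps the partial file; this behaviour has not been re-tested recently).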

The solution may be to split the large file into smaller chunks, transfer them using rsync and then join them on the remote system (ARC for example):

    # The large file is about 506 MB in this example.
    $ ls -l t.bin
    -rw-r--r-- 1 drozmano drozmano 530308481 Jun 8 11:06 t.bin

    # Split the file:
    $ split -b 100M t.bin t.bin_chunk.

    # Check the chunks.
    $ ls -l t.bin_chunk.*
    -rw-r--r-- 1 drozmano drozmano 104857600 Jun 8 11:09 t.bin_chunk.aa
    -rw-r--r-- 1 drozmano drozmano 104857600 Jun 8 11:09 t.bin_chunk.ab
    -rw-r--r-- 1 drozmano drozmano 104857600 Jun 8 11:09 t.bin_chunk.ac
    -rw-r--r-- 1 drozmano drozmano 104857600 Jun 8 11:09 t.bin_chunk.ad
    -rw-r--r-- 1 drozmano drozmano 104857600 Jun 8 11:09 t.bin_chunk.ae
    -rw-r--r-- 1 drozmano drozmano 6020481 Jun 8 11:09 t.bin_chunk.af

    # Transfer the chunks:
    $ rsync -axv t.bin_chunk.* username@arc.ucalgary.ca:

Then log in to ARC and join the files:

    $ cat t.bin_chunk.* > t.bin
    $ ls -l
    -rw-r--r-- 1 drozmano drozmano 530308481 Jun 8 11:06 t.bin

Success.
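
Comparing file sizes is only a rough check. A checksum taken before splitting and again after joining gives stronger evidence that the file survived intact. The sketch below rehearses the whole round trip locally on a small stand-in file. The 1 MiB size, the 256 KiB chunk size and the use of `sha256sum` are assumptions made for illustration. In a real transfer, the first checksum would be computed on the source system and the second on ARC.

```shell
# Work in a scratch directory so nothing else is touched.
workdir=$(mktemp -d)
cd "$workdir" || exit 1

# Stand-in for the large file: 1 MiB of random data.
dd if=/dev/urandom of=t.bin bs=1024 count=1024 2>/dev/null

# Checksum of the original, taken before splitting.
before=$(sha256sum < t.bin)

# Split into 256 KiB chunks, then delete the original to mimic the
# receiving side, where only the chunks have arrived.
split -b 262144 t.bin t.bin_chunk.
rm t.bin

# Rejoin: the shell expands the glob in sorted order (aa, ab, ac, ...),
# which is the order in which split wrote the chunks.
cat t.bin_chunk.* > t.bin
after=$(sha256sum < t.bin)

if [ "$before" = "$after" ]; then
    echo "checksums match"
else
    echo "checksum MISMATCH" >&2
fi
```

If the checksums differ, one of the chunks was probably damaged or is missing, and re-running rsync on the chunks should re-send only the ones that differ.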

File Transfer Tools

Command-line based

You may use the following command-line file transfer utilities on Linux, MacOS, and Windows. File transfers using these methods require your computer to be on the University of Calgary campus network or connected through the University of Calgary IT General VPN.

If you are working on a Windows computer, you will need to install these utilities separately as they are not installed by default. Newer versions of Windows 10 (1903 and up) have SSH built-in as part of the openssh package. However, you may be better off using one of the graphical file transfer tools listed in the following section.

scp: Secure Copy

scp is a secure and encrypted method of transferring files between machines via SSH. It is available on Linux and Mac computers by default and can be installed on Windows by installing the OpenSSH package.

The general format for the command is:

$ scp [options] source destination

- The source and destination fields can be a local file / directory or a remote one.
  - The local location is a normal Unix path, absolute or relative.
  - The remote location has the format username@remote.system.name:file/path.
  - The remote relative file path is relative to the home directory of username on the remote system.
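
To make the remote location format concrete, the sketch below takes such a string apart with plain shell parameter expansion and reports whether the path is absolute or relative to the home directory. The function name `describe_remote` is hypothetical and is not part of scp. It only illustrates how the three parts are delimited by `@` and `:`.

```shell
# Hypothetical helper: split username@host:path into its parts.
describe_remote() {
    spec=$1
    user=${spec%%@*}     # everything before the first '@'
    rest=${spec#*@}      # everything after the first '@'
    host=${rest%%:*}     # everything before the first ':'
    path=${rest#*:}      # everything after the first ':'
    case "$path" in
        /*) kind="absolute path" ;;
        *)  kind="relative to the home directory of $user" ;;
    esac
    printf 'user=%s host=%s path=%s (%s)\n' "$user" "$host" "$path" "$kind"
}

describe_remote username@arc-dtn.ucalgary.ca:projects/project1/output.dat
describe_remote username@arc-dtn.ucalgary.ca:/desired/destination
```

The first call reports a path relative to the home directory of `username`, the second an absolute path.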

You may see all the available options with scp by viewing the man page.

Example Usage

Common operations are given below. On your desktop, to:

- Transfer a single file (eg. data.dat) to ARC:

      desktop$ scp data.dat username@arc-dtn.ucalgary.ca:/desired/destination

- Transfer all files ending with .dat to ARC:

      desktop$ scp *.dat username@arc-dtn.ucalgary.ca:/desired/destination

- Transfer an entire directory to ARC:

      desktop$ scp -r my_data_directory/ username@arc-dtn.ucalgary.ca:/desired/destination

rsync

rsync is a utility for transferring and synchronizing files efficiently. The efficiency for its file synchronization is achieved by its delta-transfer algorithm, which reduces the amount of data sent over the network by sending only the differences between the source files and the existing files in the destination.

rsync can be used to copy files and directories locally on a system or between multiple computers via SSH. Unlike scp, rsync is designed to synchronize two locations, so an interrupted transfer can be resumed by re-running rsync without re-sending the files that have already arrived. Resuming a partial transfer is not possible with scp.

The general format for the command is similar to scp:

$ rsync [options] source destination

- The source and destination fields can be a local file / directory or a remote one.
  - The local location is a normal Unix path, absolute or relative.
  - The remote location has the format username@remote.system.name:file/path.
  - The remote relative file path is relative to the home directory of username on the remote system.

You may see all the available options with rsync by viewing the man page.

Example Usage

Common operations are given below. On your desktop, to:

- Upload a single file (eg. data.dat) from your workstation to ARC:

      desktop$ rsync -v data.dat username@arc-dtn.ucalgary.ca:/desired/destination

- Upload all files matching a wildcard (eg. *.dat):

      desktop$ rsync -v *.dat username@arc-dtn.ucalgary.ca:/desired/destination

- Upload an entire directory (eg. my_data to ~/projects/project2):

      desktop$ rsync -axv my_data username@arc-dtn.ucalgary.ca:~/projects/project2/

- Upload more than one directory:

      desktop$ rsync -axv my_data1 my_data2 my_data3 username@arc-dtn.ucalgary.ca:/desired/destination

- Download one file (eg. output.dat) from ARC to the current directory on your workstation:

      # Note the '.' at the end of the command, which refers to the current working directory on your computer.
      desktop$ rsync -v username@arc-dtn.ucalgary.ca:projects/project1/output.dat .

- Download one directory (eg. outputs) from ARC to the current directory on your workstation:

      desktop$ rsync -axv username@arc-dtn.ucalgary.ca:projects/project1/outputs .

sftp: secure file transfer protocol

- Manual page on-line: http://man7.org/linux/man-pages/man1/sftp.1.html

sftp is a file transfer program, similar to ftp, which performs all operations over an encrypted ssh transport. It may also use many features of ssh, such as public key authentication and compression.

sftp has an interactive mode, in which sftp understands a set of commands similar to those of ftp. Commands are case insensitive.

rclone: rsync for cloud storage

Rclone is a command line program to sync files and directories to and from a number of on-line storage services.

Graphical based

FileZilla

FileZilla is a free cross-platform file transfer program that can transfer files via sFTP.

https://filezilla-project.org/download.php?type=client (Note: Installer may bundle ads and unwanted software. Be careful when clicking through.)

MobaXterm (Windows)

MobaXterm is the recommended tool for remote access and data transfer in Windows OSes.

MobaXterm is a one-stop solution for most remote access work on a compute cluster or a Unix / Linux server.

It provides many Unix like utilities for Windows including an SSH client and X11 graphics server. It provides a graphical interface for data transfer operations.

- Website: https://mobaxterm.mobatek.net/

WinSCP (Windows)

WinSCP is a free Windows file transfer tool.